Nervous Systems

SAGE stored and tracked its aerial targets as coordinates in binary digits, recorded in the polarity of thousands of tiny, donut-shaped magnets in a three-dimensional grid of wiring—the antecedent of all real-time computer memory today. These recorded positions were connected to keyboards, displays, and teleprinters across miles of wiring and hundreds of tons of electronics. The only movement across the system was that of electrical signals. The 1950 report recommending the creation of SAGE took pains to explain the result not as an object, but as a “system . . . [like] the ‘nervous system’ . . . a structure composed of distinct parts so constituted that the functioning of the parts and their relations to one another is governed by their relation to the whole” (Hughes 1998, 21, quoting Air Defense Systems Engineering Committee 1950, 2–3).

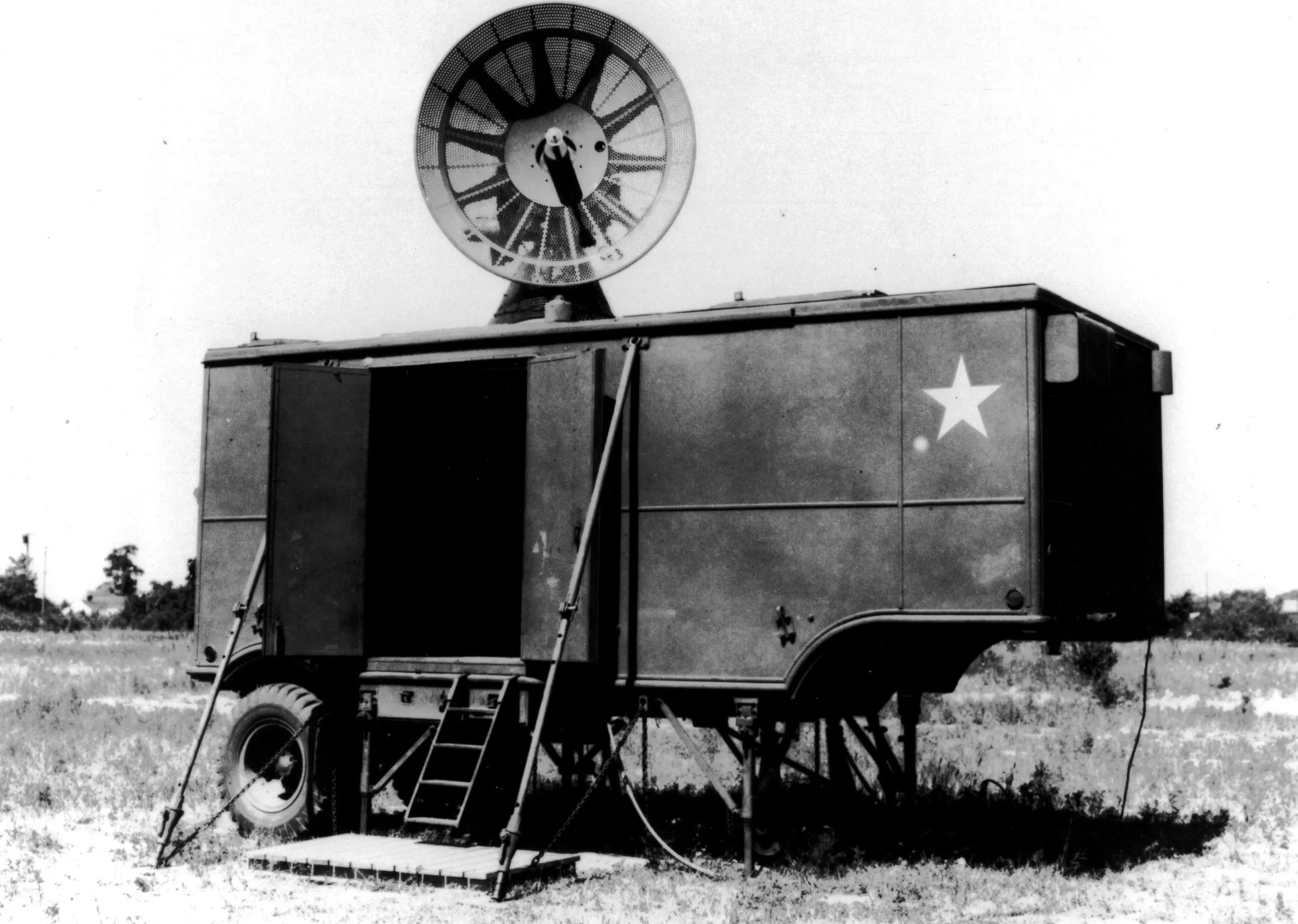

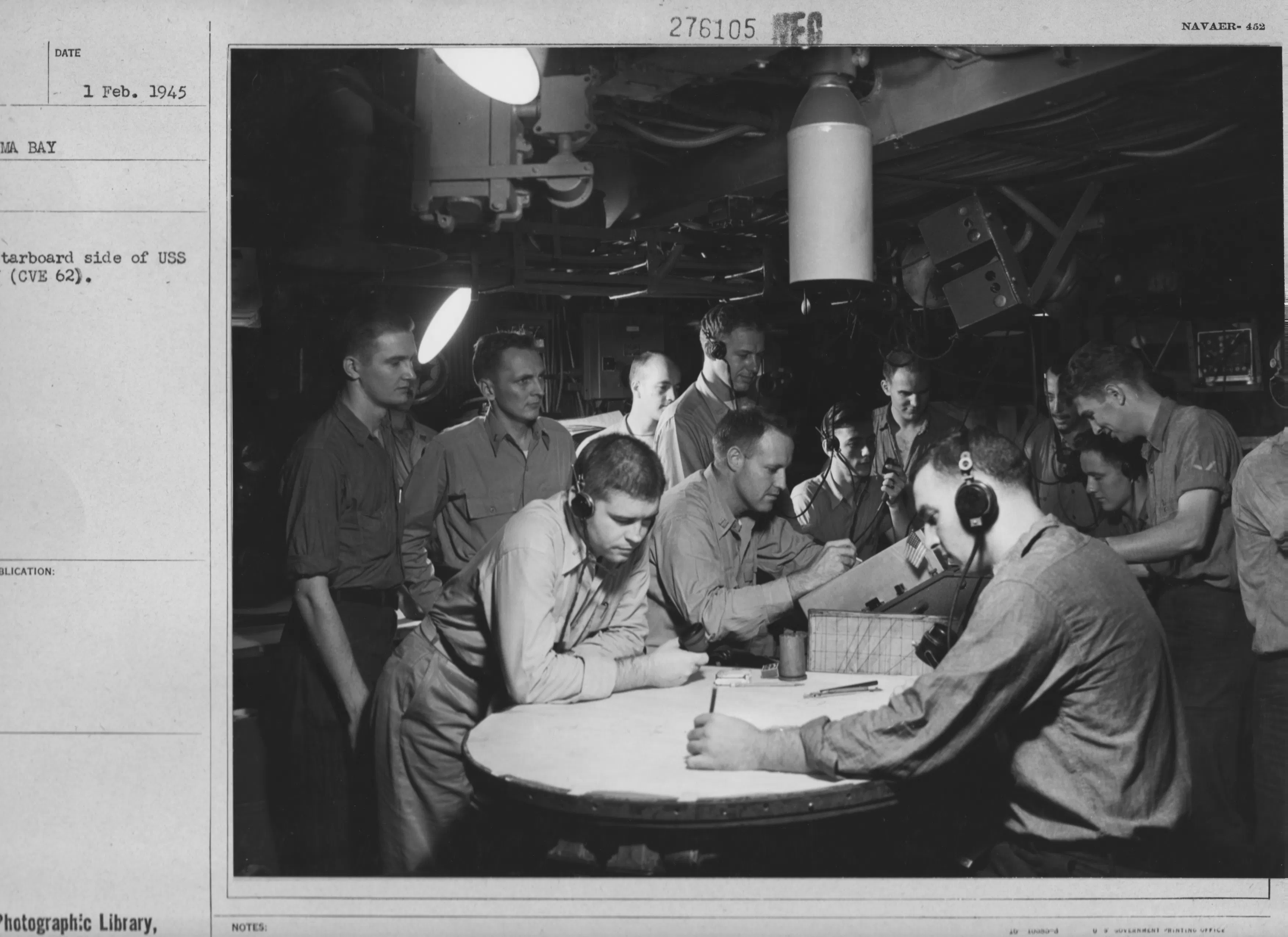

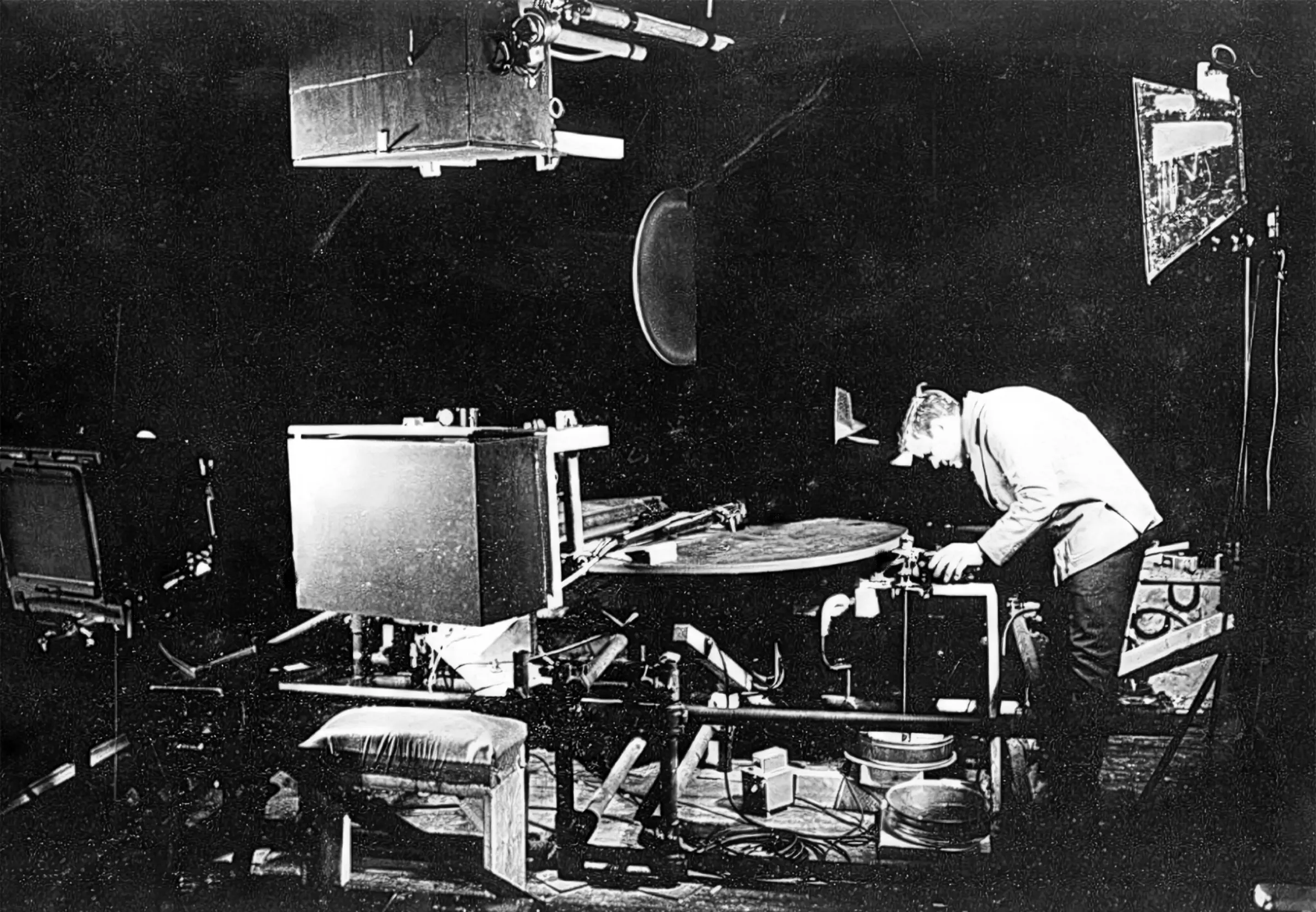

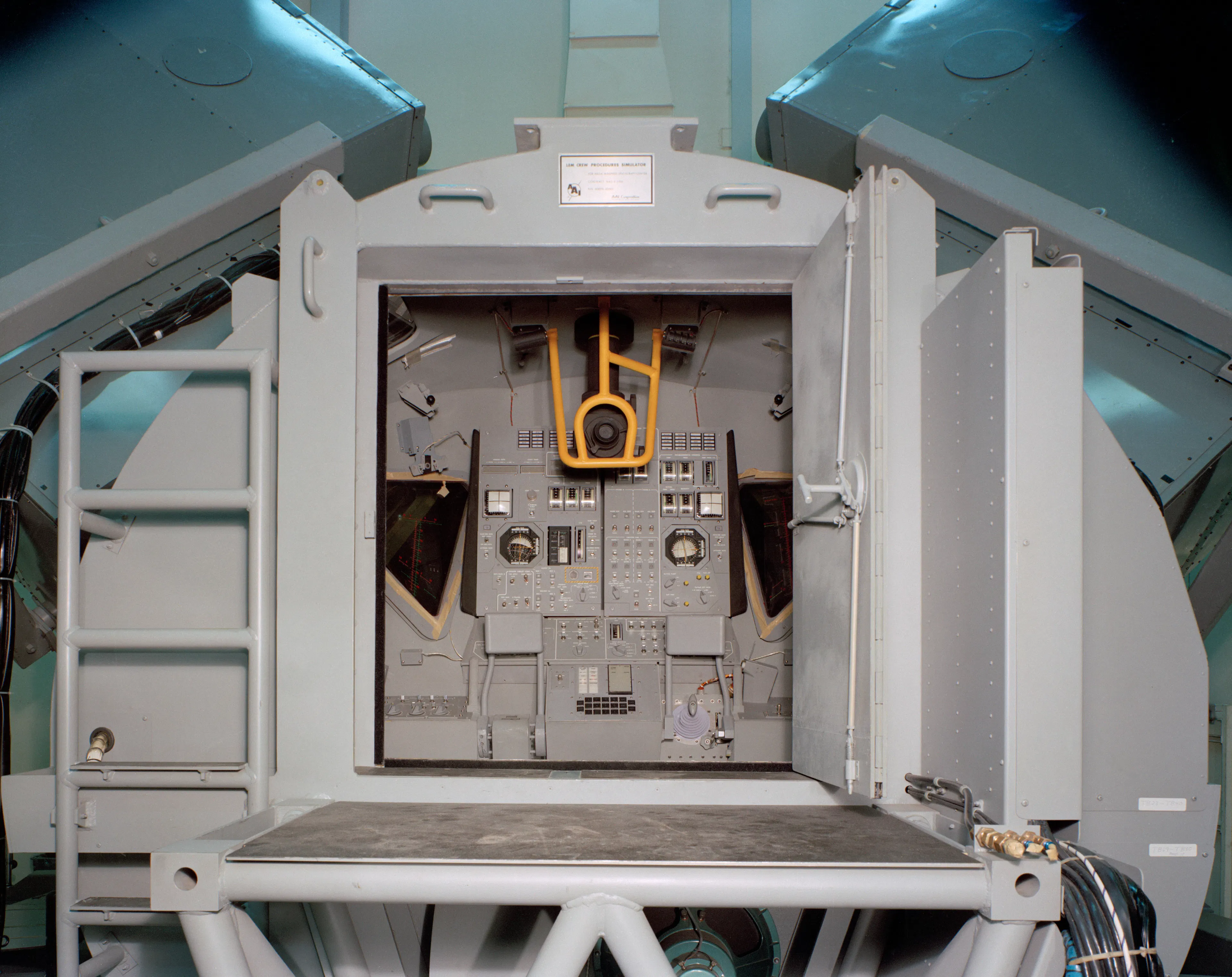

Each SAGE installation had at its heart an expanded version of Forrester’s Whirlwind computer, built by IBM: the AN/FSQ-7. This machine was the world’s first large and reliable computer capable of real-time calculation. Holding nearly 50,000 vacuum tubes alongside its thousands of tiny memory cores, it weighed almost 300 tons. Surrounding, servicing, and interfacing with the rack-mounted components of this “mainframe” were hundreds of smaller, connected “console” computers, developed at the Rad Lab’s new home in Lincoln, MA. At each console, human operators would identify targets on a cathode-ray screen with a light gun, just as their wartime predecessors had “pip-matched” in the SCR-584. However, while the “pips” of the radar system were signals coming directly from a microwave receiver, the signals on the SAGE console screen were only an abstracted interface, generated by the computer from its interpretation of data gathered across multiple radar installations.

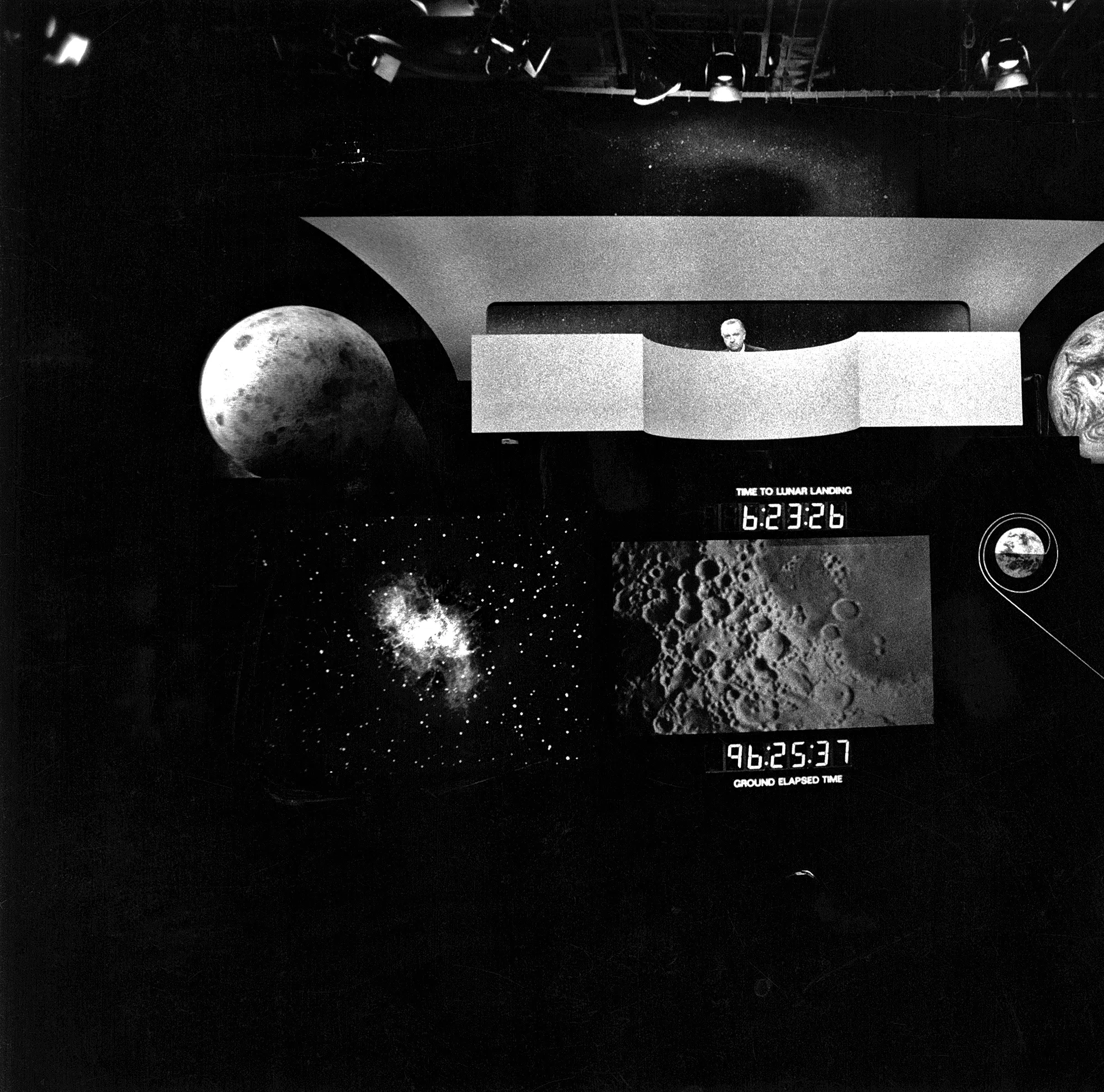

Here, on the SAGE console screen, the ghostly perception of wartime radar gave way to a fully digital phantom, albeit one that represented the most devastating and un-ephemeral prospects of nuclear violence. The system reduced all the complex movements of a conflict to strings of sixteen binary digits, electronic fragments that could be manipulated, displayed, and selected for action on screens inside a massive, windowless interior. Crucially, this meant that the nuclear attacks constantly simulated for training were indistinguishable, on screen, from actual Armageddon. The resulting toll on SAGE’s young Air Force operators was recorded in graffiti visible on surviving consoles: “Don’t you feel useless?” asks one. “I can’t stand it any longer,” concludes another.

The last movie Douglas Trumbull directed, 1983’s Brainstorm, depicts a relevant scenario: a simulation technology so comprehensive it can record and reproduce the entire human sensorium. When the film, like Silent Running (discussed below), proved a commercial failure, Trumbull found a new career designing theme-park rides and other simulated entertainments. In this work, he took particular care that mismatches in the information flowing to human subjects did not cause discomfort, or even alienation from the experience. In the operators of SAGE, however, we see the first inkling of the opposite challenge: the vertigo caused when a simulation cannot be distinguished from the terrifying reality it aims to represent.